Harish Haresamudram

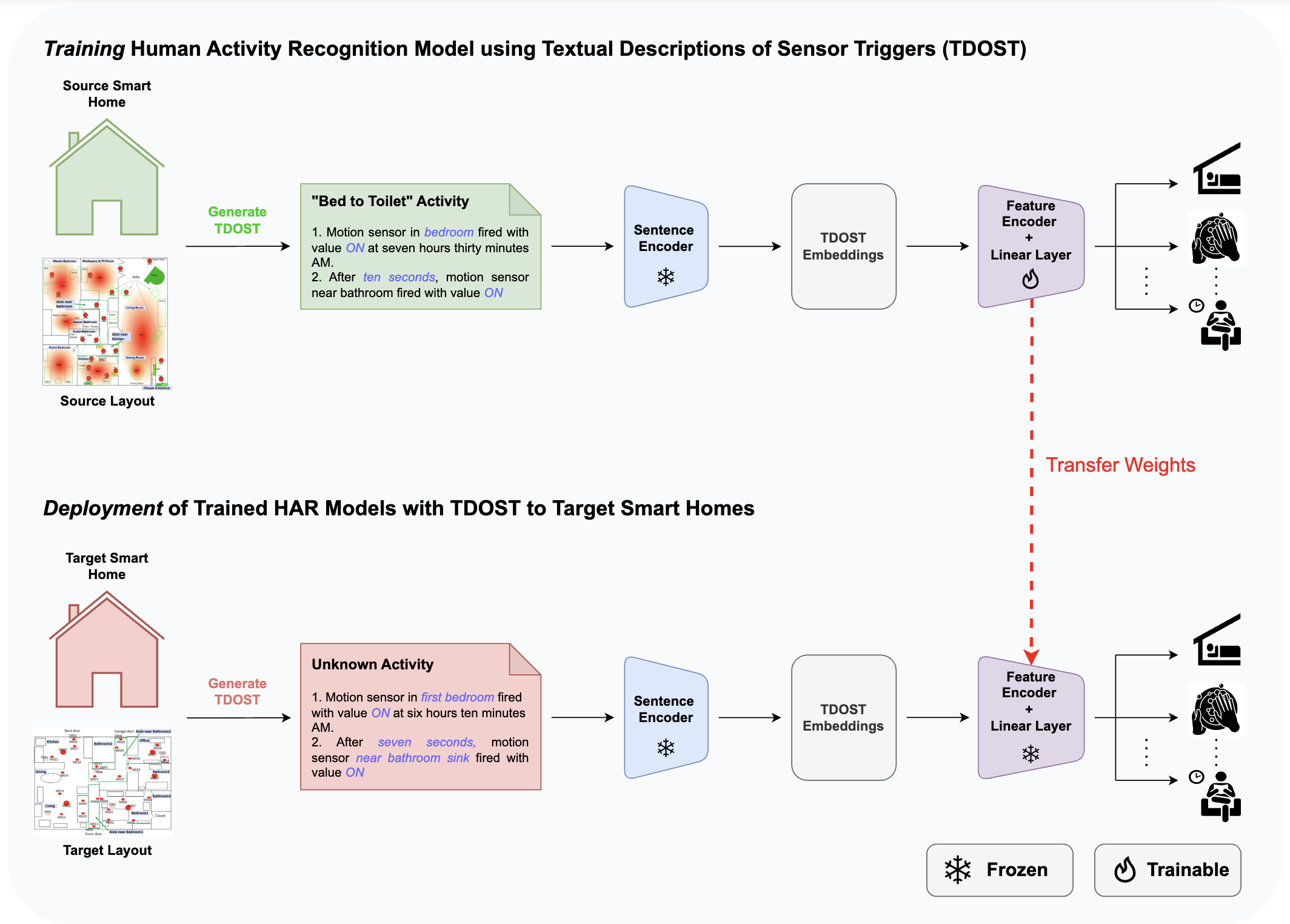

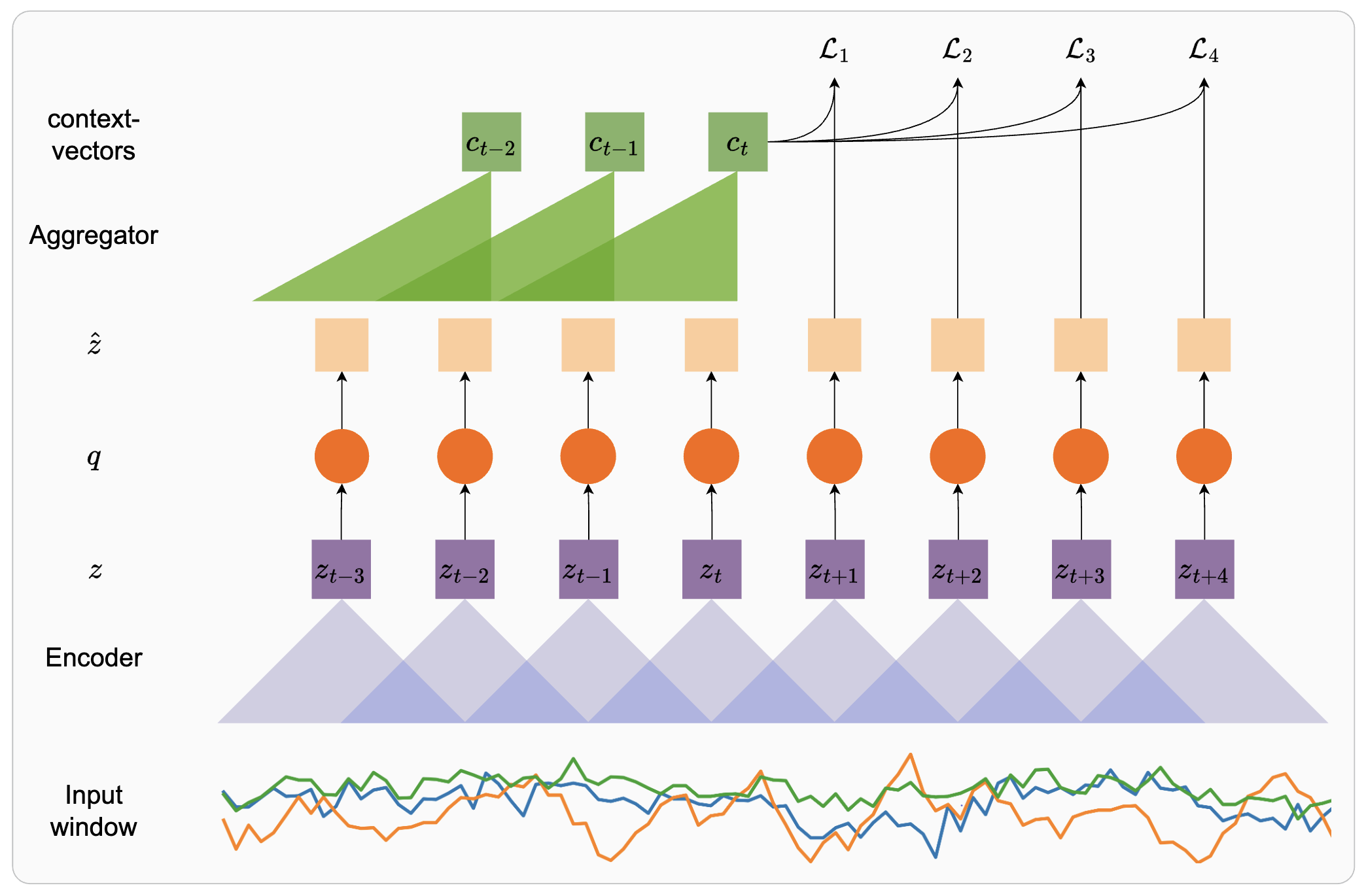

I am a Postdoctoral Research Associate at the Grainger College of Engineering, University of Illinois Urbana-Champaign, working under the mentorship of Prof. Jim Rehg. I obtained my PhD from Georgia Institute of Technology, where I was advised by Prof. Thomas Ploetz and Prof. Irfan Essa. My dissertation involved the design and development of representation learning approaches for wearable sensors, e.g., accelerometers and gyroscopes, for tasks such as Human Activity Recognition (HAR) and behavior analysis. I have been supported by funding from the National Science Foundation (through the AI-CARING Institute), Optum AI, and Google.

Research

My research broadly involves learning representations for time-series data, with a focus on developing techniques that require minimal supervision. I develop self-supervised and multi-modal learning algorithms for data from wearable sensors, e.g., accelerometers and PPG. I then use these representations to analyze health and well-being as well as human behavior.

News

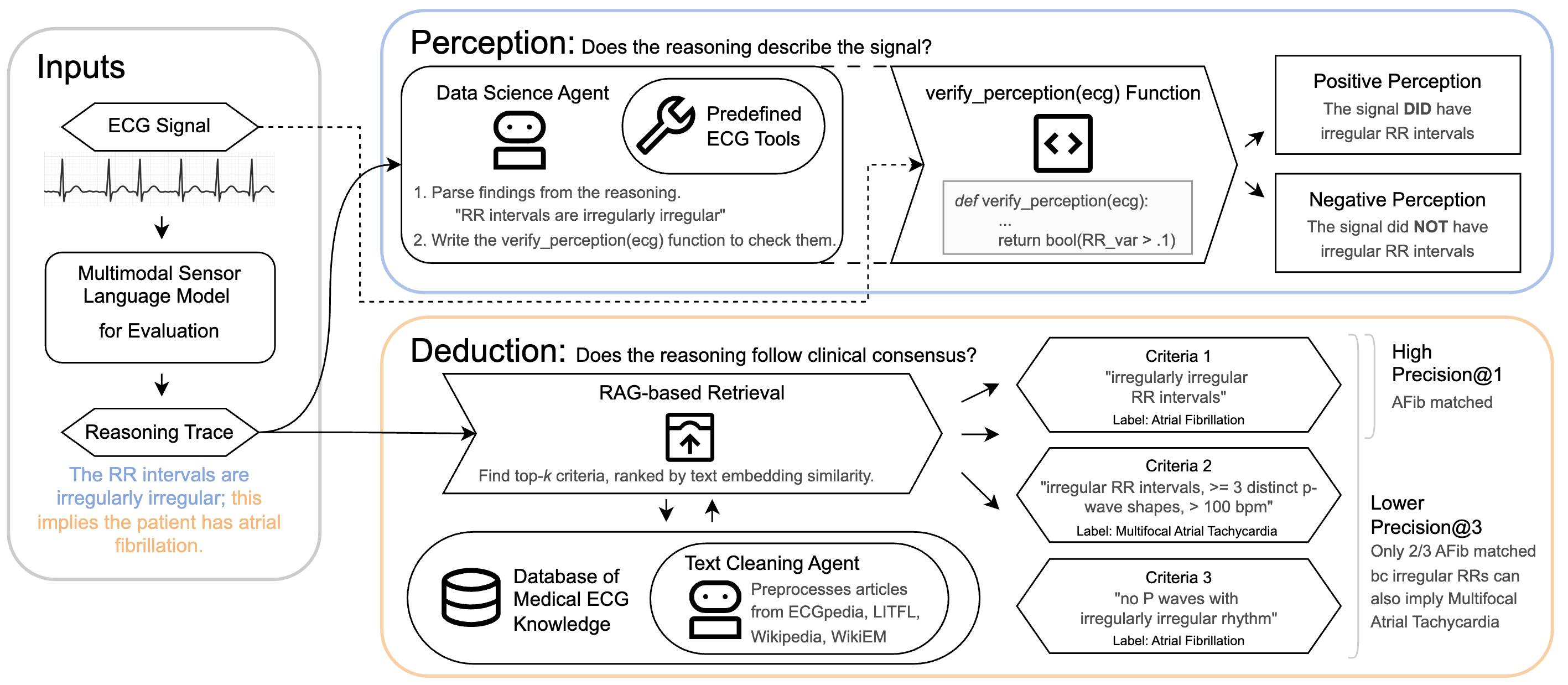

| Mar 06, 2026 | Our new paper: How Well Do Multimodal Models Reason on ECG Signals? is now available on Arxiv! We introduce a reproducible framework for evaluating reasoning capablities of Multimodal LLMs on ECG signals. |

|---|---|

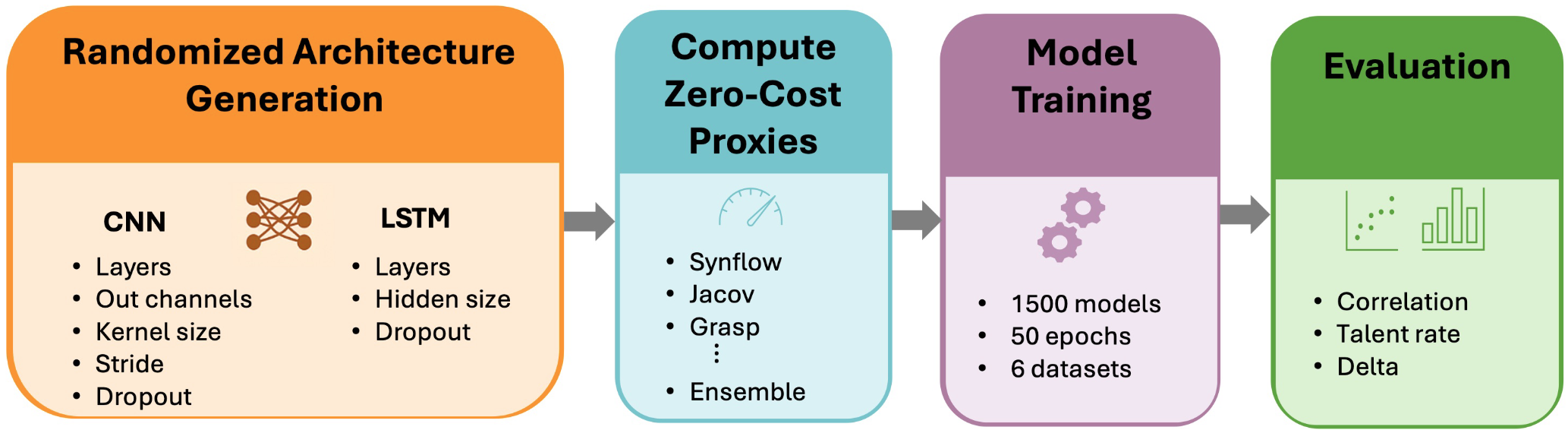

| Nov 19, 2025 | Our paper: Models Got Talent: Identifying High Performing Wearable Human Activity Recognition Models Without Training is now on Arxiv! We evaluate whether activity recognition performance can be effectively predicted without doing any training, leading to lightweight NAS. |

| Oct 15, 2025 | Honored to be the runner-up for the Gaetano Boriello Outstanding Award at Ubicomp 2025. |

| Oct 12, 2025 | Organized the GenAI4HS workshop at Ubicomp 2025! Great to see a packed session and interesting discussions! |

| Oct 01, 2025 | I joined as a Post Doctoral Research Associate in Prof. Jim Rehg’s lab at UIUC! Excited for what is to come! |

Selected publications

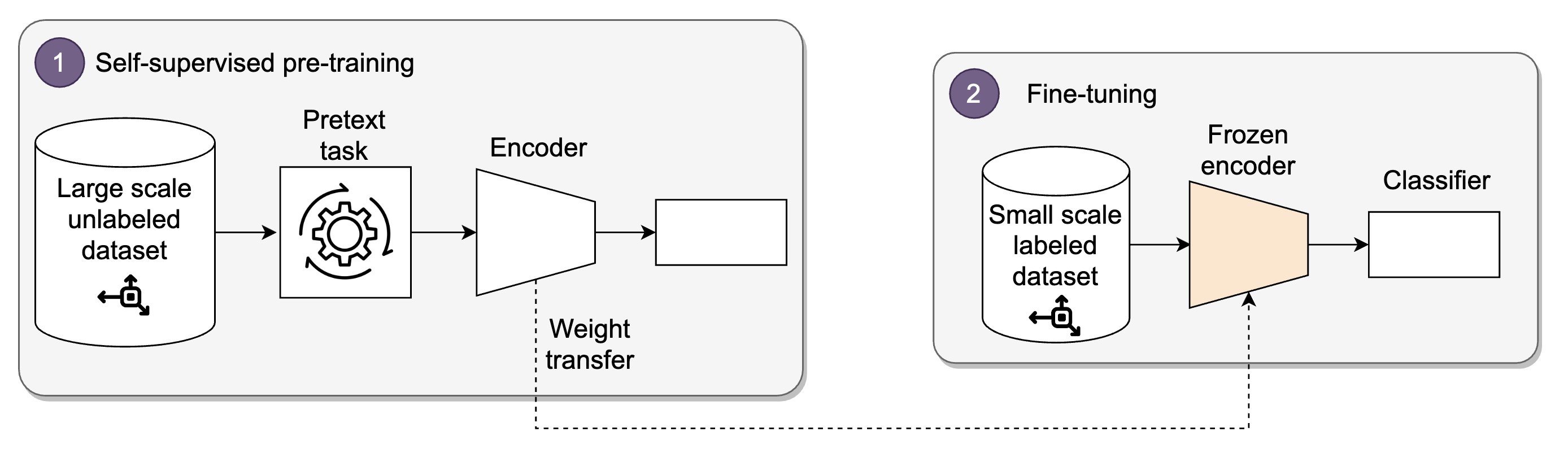

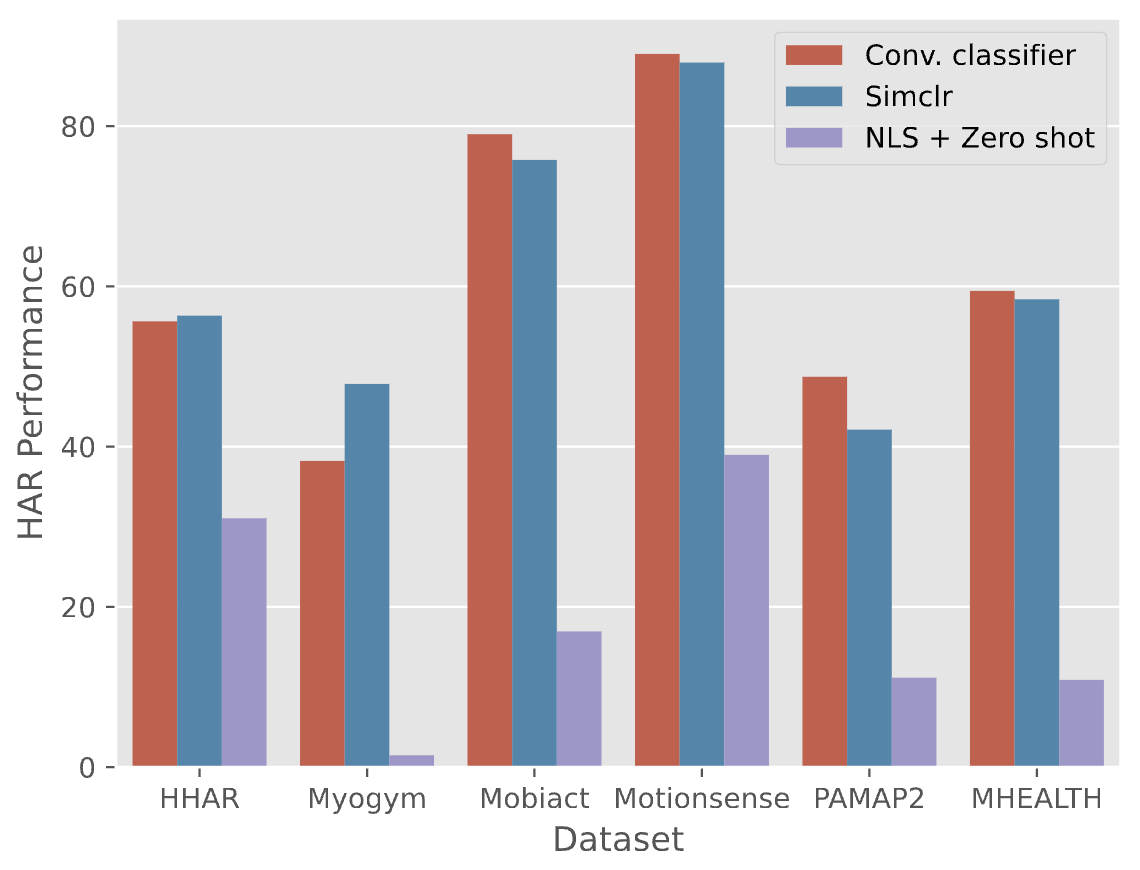

Towards Learning Discrete Representations via Self-Supervision for Wearables-Based Human Activity Recognition. Sensors, 2024
